OMNIZART: MUSIC TRANSCRIPTION MADE EASY

Omnizart is a Python library that provides a streamlined solution for automatic music transcription. It gathers the research outcomes of the Music and Cultural Technology Lab. The library analyzes polyphonic music and transcribes instrument notes [WCS20], chord progressions [CS19], drum events [WWS20], frame-level vocal melody [LS18], note-level vocal melody [HS20], and beats [CS20].

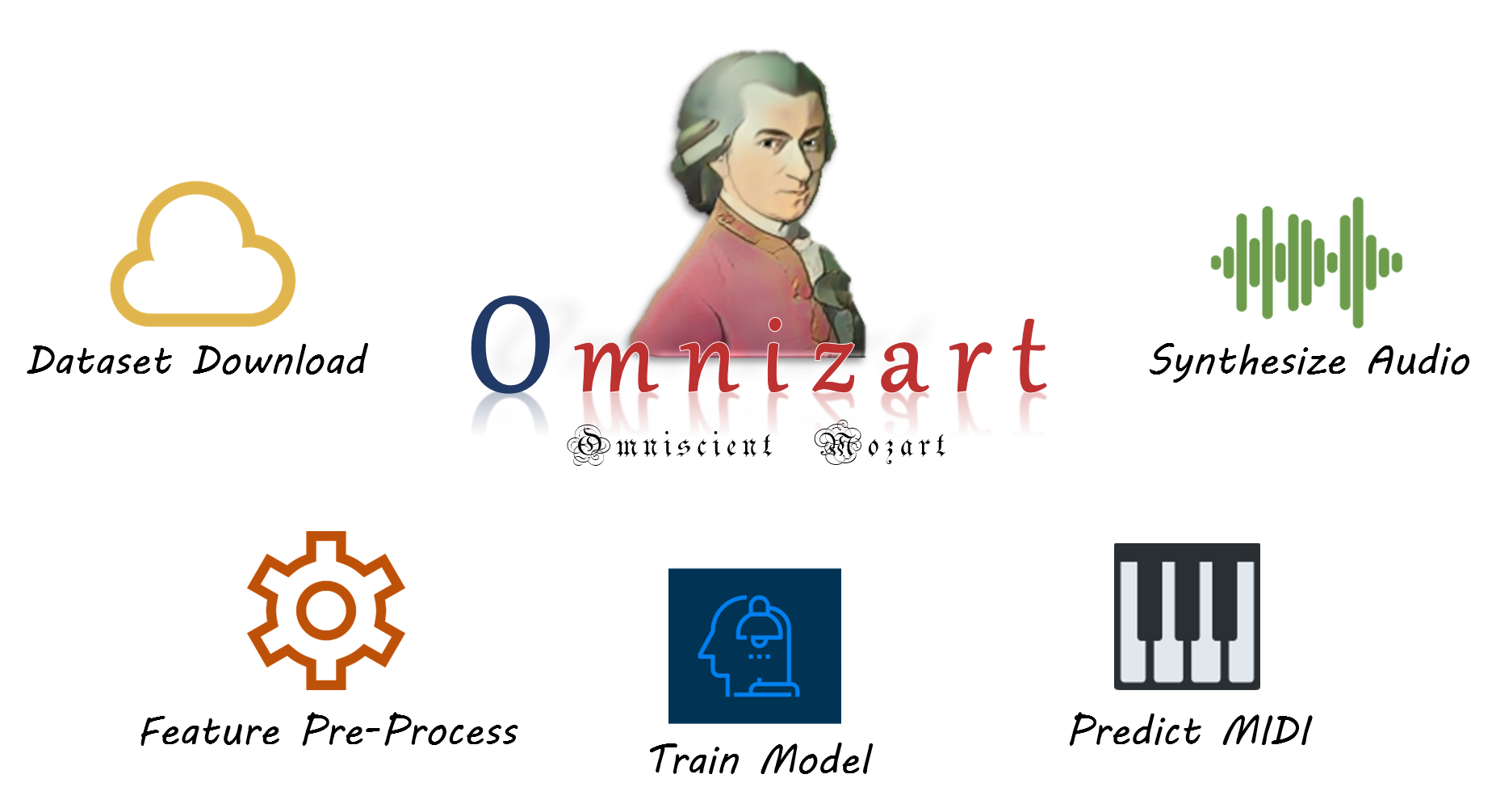

Omnizart covers the full life cycle of deep learning-based music transcription: dataset downloading, feature pre-processing, model training, transcription, and sonification. Pre-trained checkpoints are also provided so that transcription can be used immediately. The accompanying paper is published in the Journal of Open Source Software (JOSS).
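As a rough illustration of the transcription step, the sketch below builds the command lines one might run once the library is installed. The subcommand names (`music`, `chord`, `drum`, `vocal`, `vocal-contour`, `beat`), the `transcribe` action, and `download-checkpoints` are assumptions drawn from the project's README rather than from this page, and the helper `build_transcribe_command` is hypothetical; check `omnizart --help` for the options your installed version supports.

```python
import shlex

# Assumed mapping from the tasks listed above to Omnizart CLI subcommands.
# These names are not stated on this page; verify them with `omnizart --help`.
TASK_TO_SUBCOMMAND = {
    "instrument notes": "music",
    "chord progression": "chord",
    "drum events": "drum",
    "frame-level vocal melody": "vocal-contour",
    "note-level vocal melody": "vocal",
    "beat": "beat",
}


def build_transcribe_command(task, input_path):
    """Return the argv list for transcribing `input_path` for the given task.

    Hypothetical helper: it only assembles the command and does not run it.
    """
    try:
        subcommand = TASK_TO_SUBCOMMAND[task]
    except KeyError:
        raise ValueError(f"unknown task: {task!r}") from None
    return ["omnizart", subcommand, "transcribe", input_path]


if __name__ == "__main__":
    # Fetch the pre-trained checkpoints first (assumed command name).
    print(shlex.join(["omnizart", "download-checkpoints"]))
    # Then transcribe the chords of an audio file; shlex.join quotes the path.
    print(shlex.join(build_transcribe_command("chord progression", "my song.wav")))
```

The helper returns an argument list rather than a single string so that it can be passed directly to `subprocess.run` without shell quoting issues. Note that the beat reference [CS20] concerns symbolic music data, so the input for that task is likely a MIDI file rather than audio.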

Demonstration

Colab

Play with the Colab notebook to transcribe your favorite song almost immediately!

Replicate web demo

Transcribe music with the Replicate web UI.

Sound samples

Original song

Chord transcription

Drum transcription

Note-level vocal transcription

Frame-level vocal transcription

Source files can be downloaded here. You can use Audacity to open the files.

Contents

Command Line Interface

References

- [CS19] T.-P. Chen and L. Su. Harmony transformer: incorporating chord segmentation into harmony recognition. In ISMIR. 2019.

- [CS20] Y.-C. Chuang and L. Su. Beat and downbeat tracking of symbolic music data using deep recurrent neural networks. In APSIPA ASC. 2020.

- [HS20] J.-Y. Hsu and L. Su. Vocano: transcribing singing vocal notes in polyphonic music using source separation and semi-supervised learning. Under review. 2020.

- [LS18] W.-T. Lu and L. Su. Vocal melody extraction with semantic segmentation and audio-symbolic domain transfer learning. In ISMIR. 2018.

- [WWS20] I.-C. Wei, C.-W. Wu, and L. Su. Improving automatic drum transcription using large-scale audio-to-MIDI aligned data. In ICASSP (under review). 2020.

- [WCS20] Y.-T. Wu, B. Chen, and L. Su. Multi-instrument automatic music transcription with self-attention-based instance segmentation. In TASLP. 2020.